I closed my eyes in the year 2025 and opened them again a century later. A disembodied voice greeted me warmly, “Will Hill, welcome to the year 2125!” I rose unsteadily to my feet and rubbed the effects of time travel from my face while two android techs examined me. I looked around at the future that was now my present; I could not help feeling like I was in an episode of “The Jetsons” cartoon.

I was here because I had volunteered to participate in a one-year study of future prison systems, for several reasons: first, to get away from prison life in 2025; second, to contribute the results of my experience in the future toward the development of a better prison system in my era; and, more importantly, to get early parole. Plus, I was more than just a little curious about what was in store for prisoners.

“Hello Will Hill, I am CO-182. I will be your guide during your stay,” said a blue and gray robot that resembled C-3PO from the Star Wars movies as it quickly strapped an instrument to my wrist. The red light on the device turned green.

“What is…?”

“This is a biometric sensor. It monitors everything you do and say as well as your blood pressure, respiration, and brain waves.”

“Why do I need…?”

“You do not,” it said. “We do.”

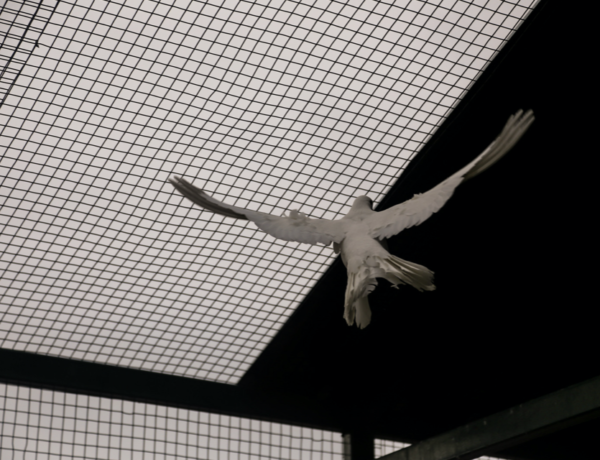

I was becoming a little peeved with how it answered me before I finished talking; I guess some things never change. The first thing I noticed about my new birdcage was all the cameras located everywhere I could see.

CO-182 continued, “The sensor on your wrist and the cameras around the unit convert biological processes into electronic information that is analyzed and stored in our databases. Given enough data, our processors can determine your desires, decisions, and opinions, which will help us to know exactly who you are.” Its electronic eyes stared unblinkingly at me. “For example, if you look at another prisoner and our cameras and sensor detect higher-than-usual blood pressure and increased activity in the amygdala, symptoms consistent with feelings of anger, you will be placed in corrective housing within minutes. This helps the institution to identify and neutralize potential problems in advance, thereby lessening the need for human officers.”

I looked around and realized that I had not seen any human officers since I arrived. The only flesh and blood bodies were other prisoners, all accompanied by their own robots. It shocked me how this software could determine human emotions based solely on eye movements and facial tics.

As if reading my thoughts, CO-182 led me through a common area and said, “The televisions are equipped with software designed to identify the shows that make you laugh or leave you sad, bored, or angry. Therefore, the next time you watch the television, only the shows that bring you pleasure will be available for viewing. How does that make you feel?” it asked, but did not wait for a response. Instead, it silently walked on with me following closely and listening intently. “Feelings are only a rapid process of calculations occurring far below your threshold of awareness. You don’t feel the millions of neurons in your brain computing probabilities of every situation you face each day in prison. Therefore, you erroneously believe that your fears and opinions are the result of the previously believed, but long since disproved, concept of ‘free will.’”

“Wait, so you’re saying prisoners have no…”

“Free will? Precisely,” it rudely interjected. “No one does, actually. This is how I am able to know you better than you know yourself – it’s called Biochemical Algorithm Technology transcribed into Big Data Infotech, which is impossible to manipulate. Through trial and error, we have learned that prisoners are notorious for being manipulative. If you try to force a laugh, for example, the biometric sensors will detect it because the brain uses different neural pathways and synapses in that instance than it does during a natural laugh.”

We stopped at the chow line, where another android that looked identical to CO-182 handed me a tray.

“This feels pretty in…,” I started to say.

“Invasive?” the automaton interrupted. “We hear that complaint from all the people who come from your era. However, we are only identifying your deepest fears, hatreds, and cravings in order to help you live a more fulfilling life. The better we understand the biochemical mechanisms that underpin human emotions, desires, and decision-making processes, the better our computers can analyze prisoners’ past behavior and predict their future decisions.”

I looked at all of the options on the food line. Each time my eyes landed on a dish that I liked, the accompanying camera’s light turned green and the dish was placed on my tray. Sitting down to eat, I felt queasy. I was learning that prison in 2125 was basically a human hack center that denied free will by watching billions of neurons calculate all the probabilities in a split second and predetermining our decisions before human emotion even had a chance to reach a conclusion.

“Human intuition is only pattern recognition, an algorithm!” CO-182 said ecstatically. Its voice dropped. “Your biometric sensor detects dislike. Perhaps we should change topics?”

I moved the peas around on my tray. “Uh, what does parole look like in 2125?”

“Parole exists in 2125,” the bot answered, then immediately changed the subject. “Members of this population choose which pod they want to live on. Each pod is designed for a specific lifestyle. Some of the available pods are education, gamers, fitness, and religious, to name only a few.”

After leaving the cafeteria, we walked down a pristine hallway lined on either side by the living locations. I peeked into the first pod and observed prisoners eating healthy snacks, lifting weights, and doing yoga. “This is obviously the…”

“Fitness pod,” CO-182 said. “These prisoners are dedicated to physical fitness and have individualized diets and workout plans designed for their particular conditioning goals.”

The occupants of the gamers pod were playing board and computer games, while the residents of the education pod immersed themselves in studying various subjects such as philosophy and ancient Greek. The religious pod was dedicated to scripture reading and prayer.

“No prisoner works a job,” CO-182 said. “Most of the prisoners from your era approve of that. We wish for them to live a contented life full of meaningful pursuits. Our algorithms report higher levels of life satisfaction, which leads to the conclusion that their quest for meaning and community eclipses their desire for parole.”

“I do NOT believe that!” I exclaimed vehemently.

“Of course you don’t believe it, Will Hill,” CO-182 said in a patronizing voice. “That is because you have not learned that human happiness depends less on objective conditions and more on your own expectations. However, humans’ expectations adapt to the conditions. When things improve, expectations soar, so even dramatic improvements, such as release on parole, might leave you as dissatisfied as before. Your sensor detects anger. Let’s change the subject and talk about how our version of parole operates. During your stay, we gather information about you based on your actions. That data is then coupled with statistics about recidivism and millions of other prisoners to be analyzed by the Parole Algorithm Board, not by a group of emotional and illogical humans. When you are eligible, you will stand before a computer that scans your biochemical data history. You will ask, ‘Parole Algorithm Board, based on your advanced knowledge about my habits, rehabilitation, views, and level of remorse, what is the best decision for me?’ It will answer with either ‘Granted’ or ‘Denied,’ all in the same day.”

“So, a soulless collection of electrodes, receptors, and wires will decide if it feels that I should be released with no human input?” I asked incredulously.

CO-182 shook its robotic head. “Computer algorithms have no feelings, Will Hill. No emotions. No gut instincts. It’s all based on calculations and probabilities of success as well as ethical guidelines that were coded based on precise data and statistics.”

Coded? I thought deeply about this strangely invasive yet impersonal prison system with no human overseers. Basically, a digital dictatorship. It occurred to me that since humans came first, whoever programmed this system had to have included their own subconscious biases into the software. Was this person a liberal or a conservative? What were their views on prison reform? Did they view the mission of prisons as rehabilitation or retribution?

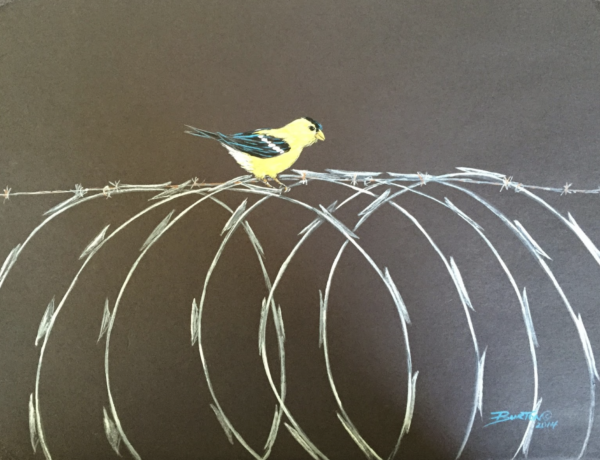

This was too much. When I left my comfortable, if frustrating, existence in 2025, I never thought I would prefer that version of prison to the future one. I missed the uncertainty of human compassion, empathy, and the ability to think for myself. A time where we are not constantly spied on, where there is no digital algorithm shaping my desires and choices. A place where free will is shaped by the interplay of inner forces that no one outside of me can see. Where I enjoy controlling my inner dialogue and outside entities do not understand how I make my decisions. Back where people use their feelings and emotions to solve problems – not algorithms.

I thought about the long, hard year I had to look forward to, if I even managed to make it through the year. Would I really want this to be the prison of the future? I tried to ask a couple of passing prisoners how much longer they had left on their year before the promised release, but they quickly averted their eyes and avoided answering the question. I recognized a couple of my friends who had joined the study years ago. I had thought they were already at home after successfully completing the program, but here they were.

I looked at my guide, CO-182, an example of artificial intelligence at its very best, a machine programmed to answer any question even before it was asked.

“I want to go back,” I said defiantly. “How do I get back to the year 2025?” The automaton blinked mechanically for the first time, as if surprised by my statement, and for once, CO-182 did not interrupt me.
